When you incorporate machine learning techniques to speed up SEO recovery, the results can be amazing.

This is the third and last installment from our series on using Python to speed SEO traffic recovery. In part one,[1] I explained how our unique approach, that we call “winners vs losers” helps us quickly narrow down the pages losing traffic to find the main reason for the drop. In part two[2], we improved on our initial approach to manually group pages using regular expressions, which is very useful when you have sites with thousands or millions of pages, which is typically the case with ecommerce sites. In part three, we will learn something really exciting. We will learn to automatically group pages using machine learning.

As mentioned before, you can find the code used in part one, two and three in this Google Colab notebook[3].

Let’s get started.

URL matching vs content matching

When we grouped pages manually in part two, we benefited from the fact the URLs groups had clear patterns (collections, products, and the others) but it is often the case where there are no patterns in the URL. For example, Yahoo Stores’ sites use a flat URL structure with no directory paths. Our manual approach wouldn’t work in this case.

Fortunately, it is possible to group pages by their contents because most page templates have different content structures. They serve different user needs, so that needs to be the case.

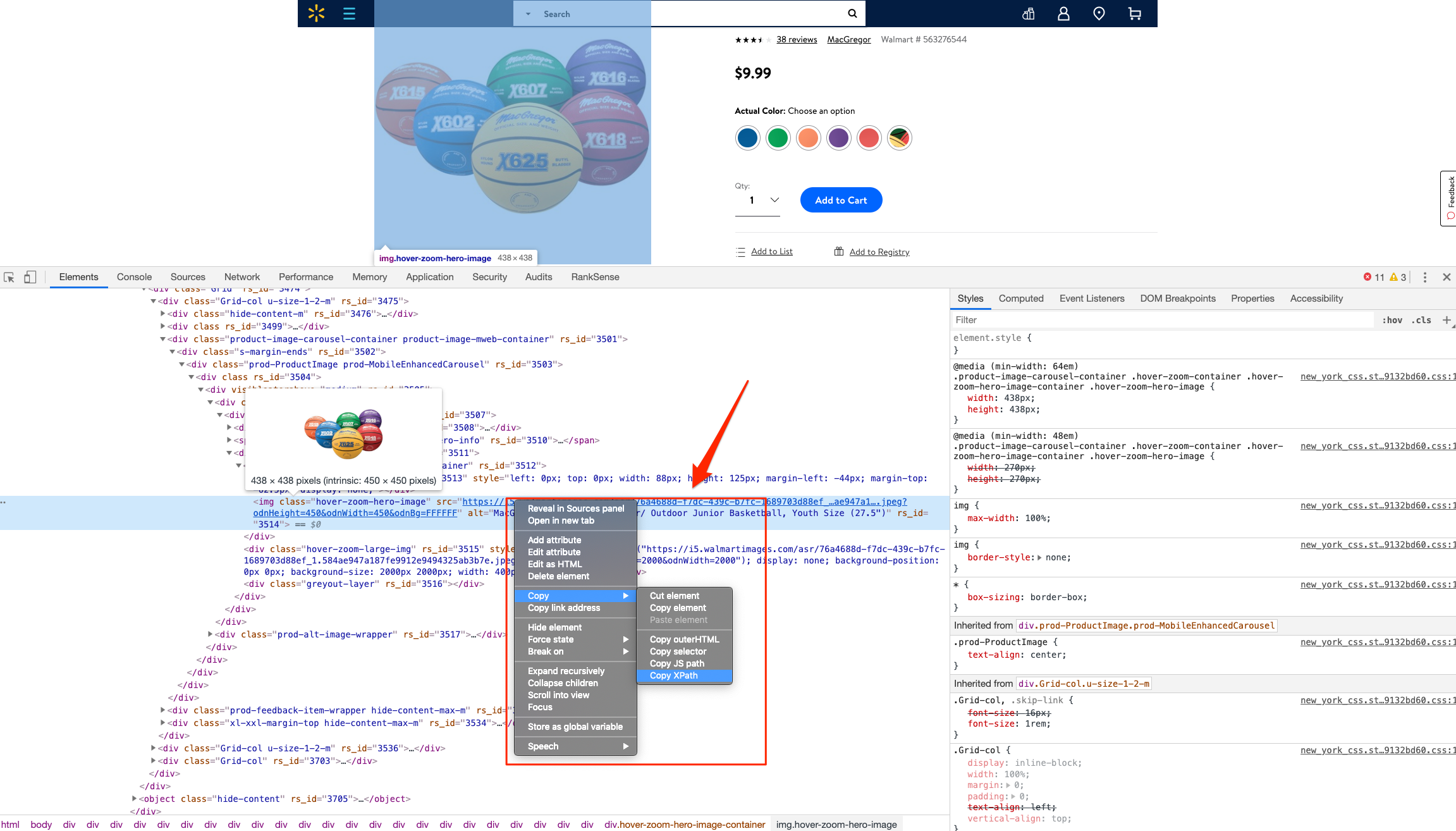

How can we organize pages by their content? We can use DOM element selectors for this. We will specifically use XPaths[4].

For example, I can use the presence of a